Or maybe not.

If you are fighting low-quality AI code, or you think the whole thing is just marketing noise, here is a radical idea: fix the environment instead of blaming the tool. Doing AI right is not rocket science. It is mostly common sense. And, as so often, discipline.

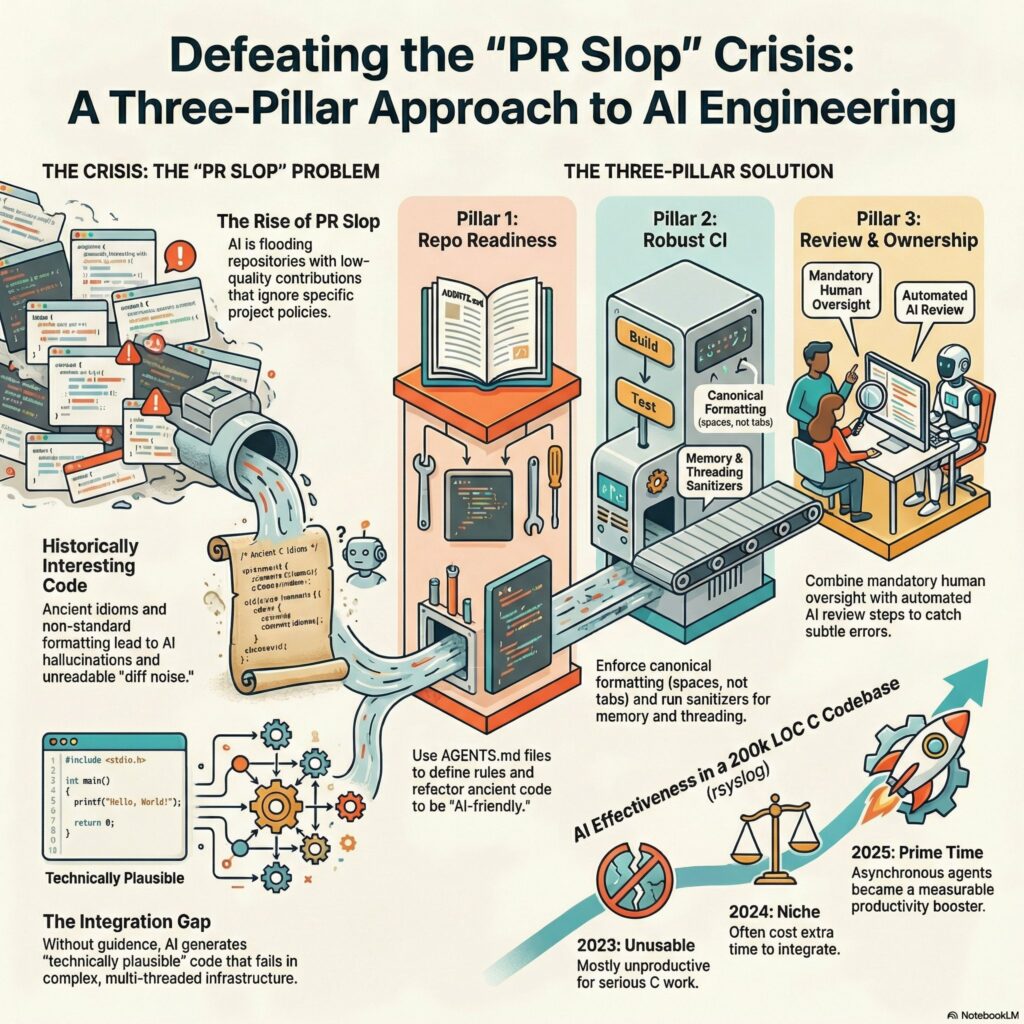

I keep repeating this because it matters. There are three simple pillars that make AI code generation actually work. I can prove it: I do it every day.

1. Make your repository ready

Most problems start here.

If your repository is a random collection of files with tribal knowledge and half-baked comments, the AI will faithfully amplify that chaos.

Repository readiness means:

- Agent documentation. Tell the machine how the project works.

- Good inline documentation. Yes, comments. Real ones.

- Code that follows clear best practices and consistent style.

Garbage in, garbage out did not stop being true just because we added transformers.

When we started with AI on rsyslog, hell, the code base was really not ready. But even adding a bit of AGENTS.md here and there improved things. Then we learned what else we can improve to get a) better code and b) better AI generation. And we are still learning.

Guess what? Human developers now understand our code better, too. And, honestly, it was not that bad when we began.

2. Install strong guardrails and safeguards

AI can move fast. That is exactly why you need brakes.

- A strong CI pipeline. Always. Really always.

- Unit tests.

- Static analyzers.

- AI review as an explicit step.

- No patch merged without CI passing. None.

CI is the ultimate safeguard, especially with AI-generated code. If you skip it “just this once”, that once will eventually hurt. And even with CI in place, something might slip through. Nothing is perfect. Human error slipped through CI for decades before AI.
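To make the "no patch merged without CI passing" rule concrete, here is a minimal sketch of a merge gate in Python. It is an illustration, not how rsyslog or any particular CI system does it: the check names in `REQUIRED_CHECKS`, the `Status` values, and the `merge_gate` function are all hypothetical. The one design choice that matters is that a missing or pending check counts as a blocker, so "just this once" is not an option the gate can express.

```python
from __future__ import annotations

from dataclasses import dataclass
from enum import Enum


class Status(Enum):
    PASSED = "passed"
    FAILED = "failed"
    PENDING = "pending"


# Hypothetical check names mirroring the guardrail list; not from a real CI setup.
REQUIRED_CHECKS = ("unit-tests", "static-analysis", "ai-review")


@dataclass(frozen=True)
class GateResult:
    mergeable: bool
    blockers: tuple[str, ...]


def merge_gate(results: dict[str, Status]) -> GateResult:
    """Allow a merge only if every required check is present and has passed."""
    blockers = tuple(
        name
        for name in REQUIRED_CHECKS
        # A check that is missing, pending, or failed blocks the merge.
        if results.get(name) is not Status.PASSED
    )
    return GateResult(mergeable=not blockers, blockers=blockers)


if __name__ == "__main__":
    # The AI review step was never run, and static analysis is still pending.
    result = merge_gate(
        {"unit-tests": Status.PASSED, "static-analysis": Status.PENDING}
    )
    print(result.mergeable)  # False
    print(result.blockers)   # ('static-analysis', 'ai-review')
```

In practice you would let your hosting platform enforce this through its required-checks settings rather than a script of your own. The sketch only shows the logic you want enforced.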

3. Ensure ownership

Tools do not ship software. People do.

- Humans use the tools. Hold them accountable.

- Keep a final human-in-the-loop check.

- Run human review after CI. That alone saves a surprising amount of iteration and wasted cycles.

Human ownership is not a magic fix. In a fully human-only process, for decades, we still had bugs. Again: nobody is perfect. But human review often detects when something merely smells strange. And with the help of AI in the human review phase (for drilling down, asking questions, and trying things out), that smell can often be traced to an actual bug more easily.

The goal is not to remove humans. The goal is to remove unnecessary friction and low-value work.

Follow these three pillars and AI-generated code becomes a productivity multiplier instead of a liability.

If you do not believe it, watch what we are doing with rsyslog. It is all out in the open. Commits, CI, reviews, the good parts and the mistakes.

No hype. Just engineering.

Do not be shy of AI. Be shy of laziness.