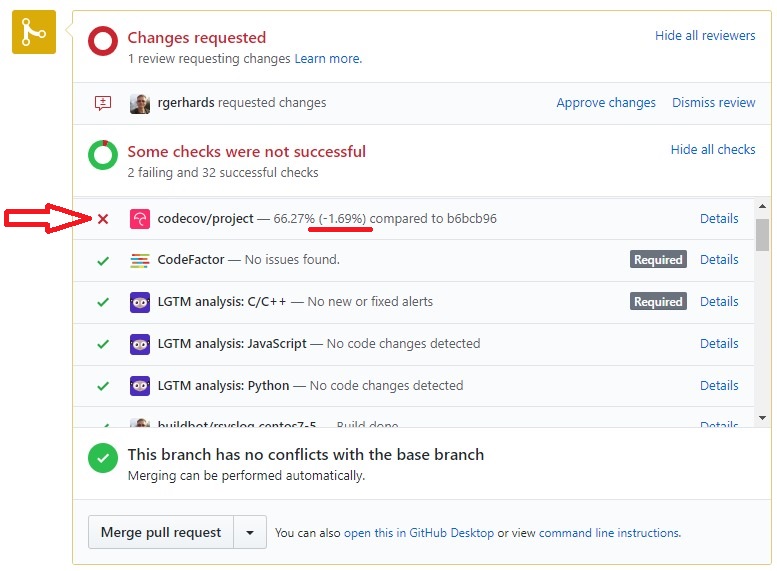

We use the CodeCov tool inside rsyslog CI processing. Naturally, this can cause CI failures, which then look similar to the one shown above.

This sometimes triggers questions from contributors on what to do about it.

Let’s start with where the status originates. In essence, CodeCov displays statement coverage. That is, it tells which statements (lines) of code have been touched by dynamic tests. So it is a good indication of how good the dynamic tests are, and which ones may be missing.
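
To make "statement coverage" a bit more concrete, here is a toy sketch in Python. It is only an illustration of the idea: rsyslog is written in C and gets its data from compiler instrumentation, not from a tracer like this one. The function `classify` and the helper `executed_lines` are made up for this example.

```python
import sys


def classify(priority):
    """Toy function under test (hypothetical, not rsyslog code)."""
    if priority < 4:
        label = "error"
    else:
        label = "info"
    return label


def executed_lines(func, *args):
    """Run func(*args) and return the executed line offsets.

    Offsets are relative to the function's `def` line, so in `classify`
    offset 3 is `label = "error"` and offset 5 is `label = "info"`.
    """
    code = func.__code__
    hit = set()

    def tracer(frame, event, arg):
        # Only follow the function we are interested in.
        if frame.f_code is code:
            if event == "line":
                hit.add(frame.f_lineno - code.co_firstlineno)
            return tracer
        return None

    previous = sys.gettrace()
    sys.settrace(tracer)
    try:
        func(*args)
    finally:
        sys.settrace(previous)  # always restore whatever was active before
    return hit


# A single "test" touches only one branch ...
one_test = executed_lines(classify, 2)
# ... while a second test with a different input touches the other one.
two_tests = one_test | executed_lines(classify, 6)
print(sorted(one_test), sorted(two_tests))
```

With only the first call, the `label = "info"` line is never executed. That is exactly the kind of line a coverage report flags as not covered, and it tells you which test is still missing.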

It is important to understand that CodeCov is “just” a front-end: the heavy lifting is done by the rest of the CI system. Actual coverage information is gathered by compiler instrumentation (--coverage switch) and the execution of the testbench. We have some runs inside CI that use this special instrumentation and report the resulting coverage to CodeCov. CodeCov then takes the raw information and generates the nice reports from it. CodeCov also checks how the PR affects overall coverage. If it stays the same or goes up, all is well. If it goes down, it emits the above warning message. What to do in the latter case?

It depends:

- If you added new modules, the number one cause of failure is that you did not write any tests for them. You really should do that, at least some basic ones. So the cure is to add tests as well. For project-supported modules, we require at least a base set of tests. For contributed code, you can get away without tests if there is really no way to add them with reasonable effort. However, this means the module cannot become project-supported in the longer term.

- If you enhanced existing modules, you probably wrote new code but no tests. This is much the same case as for new modules. Depending on what you changed, we may or may not reject contributions without tests; this depends on the circumstances.

- If you fixed something or made a small change, coverage can be affected by side effects. A maintainer will check this and we will let you know how to proceed.

- Note that due to some timing constraints and flakiness in support systems (like Kafka), as well as some yet-unresolved issues with known-problematic tests, coverage can drop slightly even without you being the root cause at all. We have configured CodeCov to show some tolerance for that. However, if things go really badly, you may still see a false positive warning.
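
The pass/fail decision described above can be sketched roughly as follows. This is a simplified illustration, not CodeCov's actual algorithm. The function names and the tolerance of 1.0 percentage points are assumptions made up for this example; the value rsyslog really uses is not stated here.

```python
def coverage_percent(covered_lines, total_lines):
    """Statement coverage as a percentage of instrumented lines."""
    if total_lines == 0:
        return 100.0  # nothing to cover; treat as fully covered
    return 100.0 * covered_lines / total_lines


def check_pr(base_pct, pr_pct, tolerance_pct=1.0):
    """Compare PR coverage against the base branch.

    tolerance_pct is a hypothetical value: drops up to this size are
    accepted, to absorb noise from flaky tests and support systems.
    """
    drop = base_pct - pr_pct
    return "pass" if drop <= tolerance_pct else "fail"


print(check_pr(70.0, 70.5))  # coverage went up
print(check_pr(70.0, 69.5))  # small drop, within the tolerance
print(check_pr(70.0, 65.0))  # large drop, triggers the warning
```

The tolerance is what keeps a small, unrelated drop from failing your PR. A large drop, for example from a flaky support system knocking out a whole group of tests, can still exceed it and produce a false positive.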

Note that all PRs are also manually reviewed. As part of this, we routinely look into CI failures and make sure they reveal actual issues. We provide advice to each contributor and are especially happy to help you get going with tests.